
We wrote this with Google Cloud in mind, but it applies equally to AWS or Azure, since they all offer very similar services for what we want to do. We will focus on Google Cloud, which is what we use on a daily basis.

If you manage any sizeable infrastructure, with development for multiple clients, test projects, pre-production machines, deployment artefacts and so on, the infrastructure bill will eventually go up and can become a problem. Even if it is not a problem and we can afford it, why pay extra for something that is easy to control? Everything we show here is automatic and, once configured, needs hardly any follow-up (although I will also show how to check whether it has worked).

Google Cloud Storage

Both for development and for storing artefacts, client files, etc., it is quite likely that we will use a service such as Cloud Storage or Amazon S3.

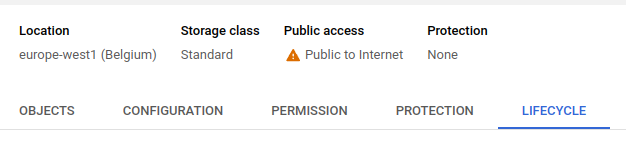

This is very common when more than one machine serves an application, because files that must be shared cannot live on a single machine's local disk and have to be kept in shared storage. Before we get to Production, it is very likely that we will have test systems, pre-production environments, files uploaded for deployment, etc. If we do nothing, these files will end up being stored forever (and we will pay for them) without any need. Although the cost of S3 or Cloud Storage is very low, it takes almost no effort to configure these buckets so that their contents are deleted after a while. Both Amazon S3 and Cloud Storage call this feature lifecycle. I will now explain how to do it for Google; you can find how to do it for Amazon S3 here.

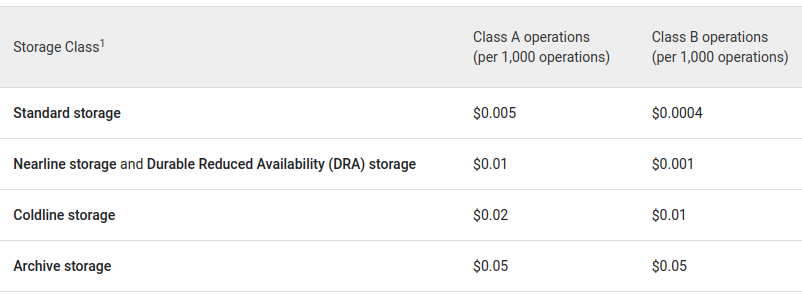

From here, new rules can be added and, in the case of the files mentioned above, the easiest way is to mark them for deletion after a certain period of time.

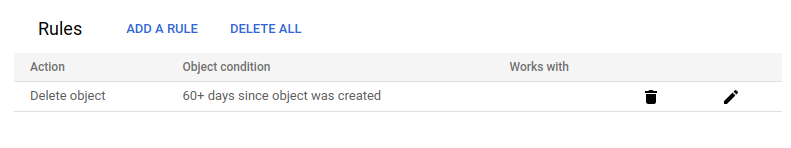

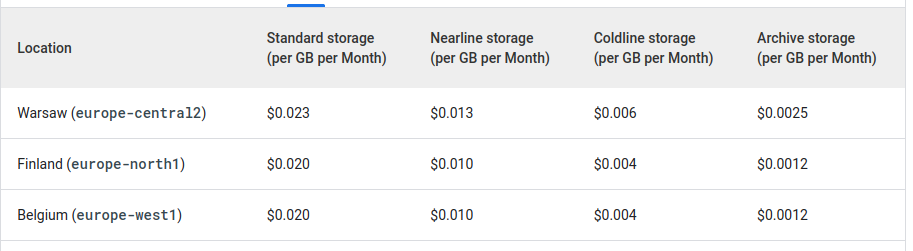

In this example we delete the files after 60 days, but in some cases, such as logs or backups, we may prefer to move them to a storage class that is cheaper to keep but that we will hardly ever access. This matters because, in the cheaper classes, we pay much more for access to the data than in Standard storage, in exchange for much cheaper storage. This is best seen in the following two tables:

As I said, Archive is 10x cheaper than Standard, but it is also 10x more expensive to read or write the information in it, so it is designed for things that are going to remain untouched for a long time (hence the name). There is also a minimum storage duration: in the case of Archive, the data has to be stored for at least 365 days.
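As an illustration of the move to cheaper storage described above, here is a minimal Python sketch that builds a lifecycle configuration with a `SetStorageClass` action instead of a deletion. The 30-day threshold and the helper name `archive_rule` are assumptions chosen for the example, not values from our setup.

```python
import json


def archive_rule(age_days: int) -> dict:
    """Build a rule that moves Standard objects to Archive after age_days.

    age_days is a hypothetical threshold; pick one that fits your data.
    """
    return {
        "action": {"type": "SetStorageClass", "storageClass": "ARCHIVE"},
        "condition": {"age": age_days, "matchesStorageClass": ["STANDARD"]},
    }


# Assumption for the example: archive logs or backups after 30 days.
config = {"lifecycle": {"rule": [archive_rule(30)]}}

# The printed JSON can be saved to a file and used as a lifecycle configuration.
print(json.dumps(config, indent=2))
```

Because of the 365-day minimum for Archive, a rule like this only makes sense for data we expect to keep for at least a year.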

We have seen how to create the rules from the console, but we usually create the buckets in code, with Terraform or something similar. In these cases, the ideal is to define the lifecycle rules at the moment the bucket (or folder) is created. To do this, we have to create a file with the rule configuration we want.

A simple JSON file to delete objects after 60 days would be:

    {
      "lifecycle": {
        "rule": [
          {
            "action": {
              "type": "Delete"
            },
            "condition": {
              "age": 60
            }
          }
        ]
      }
    }

It is then applied to the bucket with:

    gcloud storage buckets update gs://BUCKET_NAME --lifecycle-file=LIFECYCLE_CONFIG_FILE

Savings on Compute Engine machines

The next thing is how to save on Compute Engine machines or deployments that we set up to test things with customers, run demos, try out our own developments, and so on. There are two main tools for this: committed use discounts and instance schedules, which turn instances on and off on a timetable.

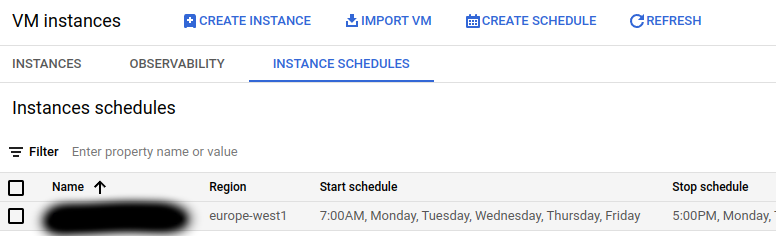

An instance schedule lets us turn instances on and off at set times on set days of the week. The instances have to be in the same region as the schedule.

In the example above, we turn the machines on at 7am and turn them off at 5pm, because this is a machine that we only use during working hours and only on weekdays. In any case, if someone needs to use it outside those hours they can always turn it on manually.
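The schedule in the example can be written down as two cron expressions. The sketch below assumes the usual five-field cron format (minute, hour, day of month, month, day of week) and adds a small hypothetical helper, `is_scheduled_on`, to check whether a given moment falls inside the working window.

```python
from datetime import datetime

# Cron expressions matching the example: start at 07:00 and stop at 17:00,
# Monday to Friday. Assumes the standard five-field cron format.
START_CRON = "0 7 * * 1-5"
STOP_CRON = "0 17 * * 1-5"

START_HOUR, STOP_HOUR = 7, 17
WEEKDAYS = range(0, 5)  # datetime.weekday(): Monday is 0, Friday is 4


def is_scheduled_on(moment: datetime) -> bool:
    """Return True if the schedule would have the instance running."""
    return moment.weekday() in WEEKDAYS and START_HOUR <= moment.hour < STOP_HOUR


print(is_scheduled_on(datetime(2024, 1, 1, 8, 0)))  # a Monday morning
print(is_scheduled_on(datetime(2024, 1, 6, 8, 0)))  # a Saturday morning
```

The helper ignores time zones; a real schedule is evaluated in whatever time zone it is configured with.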

A schedule can be attached to more than one instance, as long as, as mentioned above, they are in the same region.

With the above schedule alone, the machines would be off about 70% of the time, with the corresponding savings. Google will still charge us for the disk space they occupy while they are off, but unless it is a very large disk it should not be a lot of money.
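The 70% figure follows from simple arithmetic on the weekly schedule: 10 hours a day for 5 days is 50 hours on, out of 168 hours in a week. A short Python sketch of the calculation, with the function name `off_fraction` chosen only for this example:

```python
HOURS_PER_WEEK = 7 * 24  # 168


def off_fraction(start_hour: int, stop_hour: int, days_per_week: int) -> float:
    """Fraction of the week an instance is stopped under a daily on/off window."""
    on_hours = (stop_hour - start_hour) * days_per_week
    return 1 - on_hours / HOURS_PER_WEEK


# On from 7am to 5pm, Monday to Friday.
fraction = off_fraction(7, 17, 5)
print(f"{fraction:.1%}")  # 70.2%
```

This only covers the compute time; disks are billed regardless of whether the machine is running.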

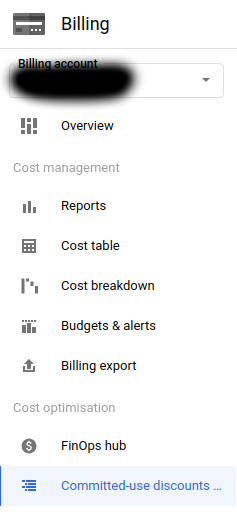

Committed use discounts can be found under the Billing tab. They allow us to commit to using machines for a period of years in exchange for a discount. You can use Google's recommender to see the optimisation suggestions it gives you. If you are in the middle of a change of infrastructure, it is better to wait until you have finished changing everything and then take some time to review these recommendations and see whether any commitment makes sense.

AppEngine and CloudRun

An alternative for test deployments or services for occasional use is serverless infrastructure, where the idea is to pay only for the time we are actually using it. If savings are the goal, there are a couple of things to keep in mind (we are always talking about test infrastructure, services for occasional use, etc., not production).

Serverless architectures are normally defined with scaling settings that let them grow or shrink depending on the traffic. In AppEngine the scaling is automatic by default, and if we look at the example they put on their website:

    <appengine-web-app xmlns="http://appengine.google.com/ns/1.0">
      <application>simple-app</application>
      <module>default</module>
      <version>uno</version>
      <threadsafe>true</threadsafe>
      <instance-class>F2</instance-class>
      <automatic-scaling>
        <target-cpu-utilization>0.65</target-cpu-utilization>
        <min-instances>5</min-instances>
        <max-instances>100</max-instances>
        <max-concurrent-requests>50</max-concurrent-requests>
      </automatic-scaling>
    </appengine-web-app>

In this example we would have a minimum of 5 instances always running. There is another parameter, not set in this case, called min-idle-instances, which determines the minimum number of instances kept ready but idle, so that if there is a traffic peak, part of it can be sent to those instances while others are started. To save money in the kind of deployments we are talking about (testing and so on), the ideal is to set both parameters to zero.
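For test environments, setting both parameters to zero can be scripted. The following is a minimal sketch using Python's standard `xml.etree.ElementTree`; the function name `scale_to_zero` and the shortened sample document are assumptions made for the example, not part of any Google tooling.

```python
import xml.etree.ElementTree as ET

NS = "http://appengine.google.com/ns/1.0"
# Keep the default namespace on output instead of generated "ns0:" prefixes.
ET.register_namespace("", NS)


def scale_to_zero(xml_text: str) -> str:
    """Set min-instances and min-idle-instances to 0 in an appengine-web.xml."""
    root = ET.fromstring(xml_text)
    scaling = root.find(f"{{{NS}}}automatic-scaling")
    if scaling is None:
        scaling = ET.SubElement(root, f"{{{NS}}}automatic-scaling")
    for name in ("min-instances", "min-idle-instances"):
        element = scaling.find(f"{{{NS}}}{name}")
        if element is None:
            # The setting is absent: add it explicitly.
            element = ET.SubElement(scaling, f"{{{NS}}}{name}")
        element.text = "0"
    return ET.tostring(root, encoding="unicode")


# Shortened, hypothetical sample based on the configuration above.
SAMPLE = """<appengine-web-app xmlns="http://appengine.google.com/ns/1.0">
  <automatic-scaling>
    <min-instances>5</min-instances>
    <max-instances>100</max-instances>
  </automatic-scaling>
</appengine-web-app>"""

print(scale_to_zero(SAMPLE))
```

Other settings, such as max-instances, are left untouched, so the same file can still scale up when someone actually uses the test deployment.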

CloudRun is simpler because, by default, it does not keep idle instances waiting to receive requests.

In both cases the first request is slower, of course, because a new instance has to be started, but we save money, which is what it is all about in this case 😊.

Another point to take into account, in both cases, is periodic executions (cron jobs or similar). In a test environment, or while developing something new, configure them to run much less frequently than in Production; otherwise we will be paying all the time for the instances that are started to perform these executions.
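To get a feel for how much the cron frequency matters, here is a rough Python sketch. The `minutes_per_run` parameter is a hypothetical figure for how long an instance stays billed per execution (run time plus any idle time before it shuts down); the real value depends on the service and its billing rules.

```python
MINUTES_PER_DAY = 24 * 60


def runs_per_day(interval_minutes: int) -> int:
    """Number of executions per day for a job that runs every interval_minutes."""
    return MINUTES_PER_DAY // interval_minutes


def billed_minutes_per_day(interval_minutes: int, minutes_per_run: int) -> int:
    """Rough estimate of billed instance minutes, capped at a full day."""
    return min(MINUTES_PER_DAY, runs_per_day(interval_minutes) * minutes_per_run)


# Hypothetical comparison, assuming 5 billed minutes per execution.
print(billed_minutes_per_day(5, 5))    # Production-like: every 5 minutes
print(billed_minutes_per_day(360, 5))  # Test environment: every 6 hours
```

Under these assumptions, a job that runs every 5 minutes keeps an instance billed around the clock, while running it every 6 hours costs a few minutes a day.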